When Nvidia showed off DLSS 5 at GTC 2026, the demo reel featured a character from Resident Evil Requiem whose face had been painstakingly sculpted by Capcom’s artists over the course of three years, complete with faint acne scarring, asymmetrical bone structure, and lighting designed to make her look unsettling rather than attractive. DLSS 5’s neural rendering smoothed all of it away in real time and replaced it with something that looked like a portrait mode selfie. The gaming community did not see a technical breakthrough; they saw an algorithm vandalising somebody else’s work.

CEO Jensen Huang called DLSS 5 “the GPT moment for graphics.” The community called it something very different. What followed was not a debate about frame rates or benchmarks but about something far more fundamental: who gets to decide what a finished game looks like, the artist who spent months crafting every shadow or the algorithm that decided to brighten them all?

So what is DLSS 5, why has it triggered an AI art controversy this intense, and what does the backlash actually tell us about the collision between computational power and human creative vision?

Key takeaways

- DLSS 5 is Nvidia’s real-time neural rendering system that adds AI-generated lighting and detail to games, going far beyond the upscaling of previous DLSS versions.

- Critics argue it overrides artistic intent, replacing deliberate, moody visuals with homogenised, AI-smoothed output.

- The AI bias concern is structural: training data skewed towards filtered internet images produces faces and textures that reinforce narrow beauty standards.

- The debate is not technical capability vs technical failure. It is a clash between trajectory arguments (“it will improve”) and values arguments (“even if it improves, I may still reject what it represents”).

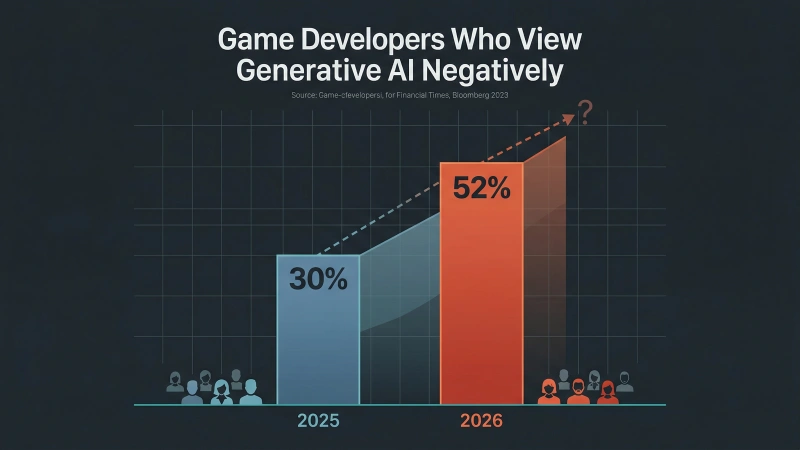

- Game developers are increasingly sceptical: 52% now view generative AI negatively, up from 30% a year earlier.

What is DLSS 5 and how does neural rendering work?

DLSS stands for Deep Learning Super Sampling. It is Nvidia’s method of using AI to predict what a high-resolution image should look like based on a much lower-resolution starting point. Instead of your GPU grinding through every pixel in 4K, it renders a smaller image and the AI rapidly fills in the rest.
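To put a rough number on that saving, here is a small sketch of the pixel arithmetic. The two resolutions are illustrative assumptions only: the internal resolution DLSS actually renders at depends on the quality mode and is not stated in this article.

```python
# Illustrative resolutions (assumptions, not Nvidia's published settings).
def pixel_count(width: int, height: int) -> int:
    """Number of pixels in a frame of the given size."""
    return width * height

native_4k = pixel_count(3840, 2160)       # frame the player sees
internal_1080p = pixel_count(1920, 1080)  # frame the GPU actually renders

# How many times fewer pixels the GPU shades before the AI fills in the rest.
saving = native_4k / internal_1080p

print(f"4K frame: {native_4k:,} pixels")
print(f"1080p frame: {internal_1080p:,} pixels")
print(f"Pixels rendered directly: 1 in {saving:.0f}")
```

Under those assumed numbers, only one pixel in four is rendered conventionally; the remaining three are predicted.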

The evolution from DLSS 1 to DLSS 4

Previous versions were primarily efficiency tools. DLSS 1 was blurry and mocked relentlessly. DLSS 2 introduced temporal feedback and became genuinely useful. DLSS 3 added frame generation, using AI to create entirely new frames between rendered ones, essentially inventing frames that the GPU never drew. DLSS 4 refined this with transformer-based models and multi-frame generation. Throughout this evolution, the core principle remained the same: make games run faster without sacrificing the artist’s intended visual output.
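The idea of "inventing" a frame between two rendered ones can be shown with a deliberately naive toy. This is not how DLSS frame generation works (Nvidia's approach relies on a trained model and motion information); the hypothetical `blend_frames` helper below simply averages two tiny greyscale frames to show what an in-between frame is.

```python
# Toy illustration only: a linear blend, NOT Nvidia's frame generation method.
def blend_frames(frame_a, frame_b, t=0.5):
    """Blend two equal-sized greyscale frames (lists of rows); t=0.5 is the midpoint."""
    return [
        [(1 - t) * a + t * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]

frame_a = [[0, 0], [100, 100]]   # rendered frame N
frame_b = [[100, 100], [0, 0]]   # rendered frame N+1

# A frame the GPU never drew, synthesised from its two neighbours.
middle = blend_frames(frame_a, frame_b)
print(middle)
```

A plain blend like this produces ghosting on anything that moves, which is one reason real frame generation needs something far more sophisticated than averaging.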

The evolution from DLSS 1 to DLSS 5: each generation added more AI capability, but DLSS 5 crossed a line from efficiency tool to creative override.


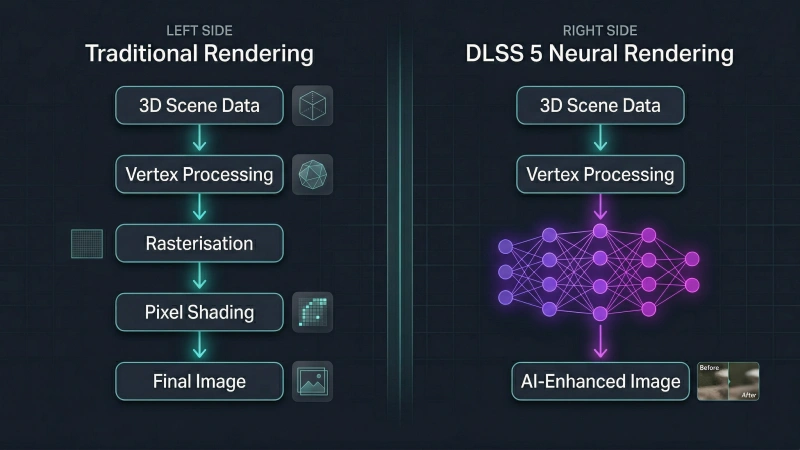

What makes DLSS 5 different

DLSS 5 breaks that principle. Announced at GTC 2026 and exclusive to the RTX 50 series GPUs built on Nvidia’s Blackwell architecture, DLSS 5 introduces what Nvidia calls “real-time neural rendering.” The distinction between frame generation and neural rendering matters. Rather than simply upscaling an image or generating intermediate frames, DLSS 5 uses AI to add photorealistic lighting, subsurface scattering on skin, and material detail that did not exist in the original render. It predicts what a scene should look like based on patterns learned from vast datasets of images.

Jensen Huang compared the significance to GPT and ChatGPT, calling it “the GPT moment for graphics.” The technology is scheduled for a fall 2026 launch, with confirmed support from Bethesda, Capcom, Ubisoft, and Warner Bros. Games. Titles including Assassin’s Creed Shadows, Hogwarts Legacy, Resident Evil Requiem, Starfield, and The Elder Scrolls IV: Oblivion Remastered are all slated for DLSS 5 support. Early demos required two RTX 5090 cards running simultaneously, though Nvidia is targeting single-GPU support at launch.

For a detailed technical demonstration, Digital Foundry’s coverage is worth watching.

But here is the catch. An efficiency tool that makes your existing game run faster is one thing. An AI system that adds visual information the artist never created is something very different. And that difference is exactly where this story gets loud.

How DLSS 5’s neural rendering pipeline differs from traditional game rendering. The AI adds detail that was never in the original render.


Why gamers play, and why this matters

Before getting into the backlash, it helps to understand why this debate cuts as deep as it does.

Gamers do not, by and large, care whether every photon bouncing off a dungeon wall obeys the laws of thermodynamics. They are not looking for physics-accurate realism. They are looking for atmosphere. They want the artist’s deliberate vision: the moody lighting, the gritty textures, the imperfections that make a world feel authored and alive.

When a rendering algorithm overrides that vision in the blind pursuit of photorealism, the experience does not feel like an upgrade. It feels like a betrayal. The loss of human intent severs the emotional connection between the creator and the player.

DLSS 5 promises prettier games. But “prettier” and “better” are not the same thing, and the gaming community has made it very clear which one they actually value.

AI generated art and the DLSS 5 controversy

Within hours of the DLSS 5 showcase going live, gaming communities erupted. The reaction was not mild scepticism. It was visceral.

The immediate backlash

Critics compared the technology to slapping a smartphone beauty filter over a hand-painted masterpiece. Three years of meticulous shadow work, moody lighting, and deliberate grit, all instantly smoothed into a generic glow. One vocal critic called it “horrendous,” “a joke,” and “utter nonsense.” The backlash was immediate and relentless.

The complaints were forensic. Artists spend hours, sometimes days, getting the lighting in a scene just right. The shadows are deliberate. The contrast is atmospheric. The mood is unmistakable. DLSS 5’s AI brightened those shadows, flattened the contrast, and made everything look uniformly well-lit. In the words of one critic, the AI “crapped all over” months of painstaking lighting work.

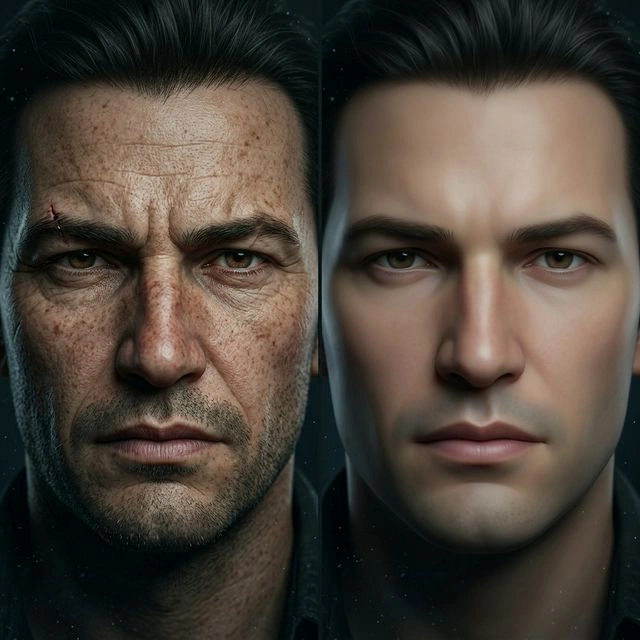

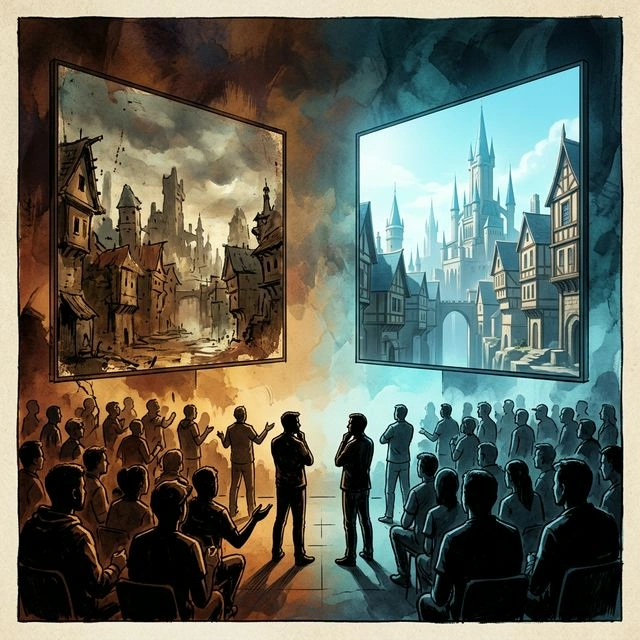

The face problem

Faces were worse. The AI replaced carefully designed, unique character faces with generic archetypes. Young women started looking identical. Older characters lost their scars, their asymmetries, the deliberate imperfections that gave them personality. It was like replacing a real face with a mathematical average of what a face “should” look like, and doing it at scale across an entire game.


The face problem in action: deliberate character detail on the left, AI-smoothed homogeneity on the right.


This brought up the most troubling concern for the industry. If AI fills in the visual details, why would developers invest in meticulous hand-crafted environments or character work? Game artists have openly questioned this, and the fear is real. The AI art controversy around DLSS 5 is not about whether the technology works. It is about whether AI in gaming production creates a structural incentive for cutting corners, one that slowly erodes the culture of craft. The same tension exists in AI content detection, where the line between human and machine output becomes increasingly difficult to draw.

Adding fuel, reports emerged that developers at Capcom and Ubisoft did not know their games were being used for the DLSS 5 demo at GTC. This detail landed badly in a community already suspicious of how the technology was being pushed.

AI bias in DLSS 5’s training data

Beneath the surface-level complaints about lighting and faces, there is a deeper and more uncomfortable issue.

DLSS 5’s AI does not “know” what a human face looks like. It knows the mathematical patterns of the billions of images it was trained on. If those images are disproportionately filtered, airbrushed, and homogenised, that is exactly what the AI reproduces. Not because the code is malicious, but because the data is biased.
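A toy calculation shows how that statistical pull works. Everything here is a hypothetical assumption for illustration: the "smoothness" scores, the 90/10 split, and the idea that a model's default can be summarised as a simple mean. Real generative models are far more complex, and nothing below describes DLSS 5's actual training data.

```python
from statistics import mean

# Hypothetical "skin smoothness" scores: 0.0 = heavily textured, 1.0 = airbrushed.
filtered_images = [0.9] * 90    # assumed: 90% of the data is filtered or retouched
unfiltered_images = [0.2] * 10  # assumed: 10% shows natural skin texture

training_set = filtered_images + unfiltered_images

# A crude stand-in for what the model treats as a "normal" face.
learned_default = mean(training_set)

# An artist's deliberately rough character sits far from that default.
authored_face = 0.2
pull = learned_default - authored_face

print(f"Learned default smoothness: {learned_default:.2f}")
print(f"Distance from the authored face: {pull:.2f}")
```

With a dataset skewed this way, the default lands at roughly 0.83, much closer to the airbrushed majority than to the authored face. No line of the code expresses a preference; the skew comes entirely from the data.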

The data reflects the bias

A 2025 study published in Scientific Reports found that AI-generated faces consistently reinforce gender stereotypes and racial homogenisation. One debate participant put it bluntly:

"DLSS 5 is a perfect example of how AI data sets inevitably reinforce sexism and impossible beauty standards."

This is not theoretical. When Horizon Forbidden West launched, the protagonist was designed as a realistic warrior surviving in a post-apocalyptic wilderness. She had peach fuzz, sun damage, and normal facial proportions. Certain online communities complained she was not attractive enough and used AI tools to smooth her skin, thin her jawline, and add heavy makeup. Those fan edits were widely ridiculed at the time. But DLSS 5 now automates that same process, baked directly into the rendering pipeline. The AI defaults to painting “perfect” pixels over the deliberate, gritty textures the original artist created. The AI bias is not intentional. It is structural.

Training data is not a separate problem

One defender posed a thought experiment: what if a company deliberately trained their AI models on “average joes” instead of supermodels and Instagram filters? If you fed it strictly realistic data that did not impose impossible beauty standards, would the critics accept the technology? The response from the room was sceptical. Today’s AI is built on internet-scraped data that already contains those biases. Fixing the training set is a theoretical solution to a practical problem that no company has actually solved.

The parallel to other industries is hard to ignore. AI hiring software has famously discriminated against women and minorities, not because anyone wrote discriminatory code, but because the historical data it learned from contained those patterns. The AI looked at who got hired in the past, found a pattern, and replicated it. The same principle applies to visual rendering.

A UNESCO report from February 2026 projected that artists face a 24% revenue decline due to AI by 2028. The financial and creative impact is not on its way. It is already here.

This raised what one participant called “the most critical question of the entire debate”: can generative AI ever be truly neutral? Or is it fundamentally doomed to act as a mirror, reflecting and amplifying the prejudices, biases, and impossible standards of the internet data it scraped to exist?

AI bias in action: diverse input data enters the training pipeline, but the output defaults to a narrow, homogenised ideal.


The defence: why some believe DLSS 5 is being judged too early

Not everyone agreed with the outrage. At least one voice in the debate walked in like Homer Simpson backing slowly into the bushes, realising he disagreed with literally everyone else in the room, and said, “Actually, I think it looks fine.”

They had a point, or at least part of one.

The technical argument

The technical counterargument is that the underlying geometry and textures are being retained by DLSS 5, not overridden wholesale. The AI enhances the image rather than bulldozing it. And history supports this defence: DLSS version 1 was mocked relentlessly when it first launched. It smeared images. It looked blurry. People called it useless. It evolved rapidly and is now an industry standard that most gamers leave turned on by default.

The analogies were compelling too. Early 3D games in the mid-1990s were incredibly blocky and looked far worse than the polished 2D sprite art they replaced. People mocked early 3D. They said it ruined the artistry. It evolved, refined, and completely took over. VR, by contrast, was supposed to be the defining moment of 2015 but remains relatively niche eleven years later. So which path does DLSS 5 take?

Remember AI image generation tools a few years ago? Everyone mocked them because they gave people seven fingers and backwards thumbs. Then the technology corrected itself terrifyingly fast. Today, AI-rendered anatomy is practically flawless. Current DLSS 5 artefacts may vanish just as quickly.

Where the defence stumbled

But the defence had a rhetorical weakness. The statement “literally none of the artist’s work has been replaced” was too absolute. For artists, final appearance is the work. Not just the mesh, not just the albedo, not just the normal map. The final image is the point of the whole circus. If the lighting mood, facial response, skin treatment, and overall vibe are altered, then the artistic result has been altered, even if the source assets were not overwritten on disk.

"You are inspecting the mechanism. They are reacting to the meaning."

Even Jensen Huang’s own position shifted. At GTC, he told critics they were “completely wrong”. Days later on the Lex Fridman podcast, he softened considerably, calling himself “empathetic” to the concerns and admitting he does not love “AI slop” either, while insisting DLSS 5 is “content-controlled generative AI” that gives developers the final say.

But the room was not having it.

"Even if it becomes excellent, I may still reject what it represents and what incentives it creates."

The question of AI-generated art quality is secondary. The real question is purpose.

Will AI replace artists, or just their choices?

The critics in this debate are not anti-AI. They said so explicitly. They do not hate machine learning as a concept. They recognise its utility. What they reject is this specific implementation: the one that overrides creative intent.

AI done right

And they pointed to examples of AI done right.

An AI tool called Beeble, used in video production, can relight scenes without massively altering the physical appearance of actors. It understands the 3D space and enhances the lighting, but it does not try to redraw the human element. It respects the boundaries of the original footage.

Ray Reconstruction, used in Unreal Engine for upcoming titles like Crimson Desert, works like a hyper-intelligent foreman on a construction site. It does not build the house. It directs the workers on exactly which light rays matter most to the human eye, then cleans up the grainy image that results from ray tracing. It leaves final artistic control entirely in the developer’s hands. It does not replace textures. It does not alter lighting to fit an algorithm’s preference. It preserves artistic intent and helps the game achieve the exact result the artist already wanted, just more efficiently.

AI done wrong

DLSS 5’s generative override does the opposite. It steps in front of the artist and imposes its own aesthetic over the authored result. The source files are untouched on disk, but the image the player sees is fundamentally altered.

The distinction is clean. AI as invisible scaffolding, supporting human intent. AI as dictator, overriding it. The technology is the same. The control architecture is everything.

The same technology, two very different control architectures. On the left, AI supports human creative decisions. On the right, it replaces them.


And when people ask whether AI will replace artists, the answer is not a simple yes or no. It depends on which version of AI wins. Pearl Abyss recently apologised after AI-generated placeholder art was left in Crimson Desert’s final release, which shows the laziness incentive is already producing real-world consequences.

The following table summarises the key difference:

| | AI as scaffolding (Ray Reconstruction) | AI as override (DLSS 5 generative) |
|---|---|---|
| Who controls the final image? | The artist | The algorithm |
| Does it change the artist’s intent? | No, it preserves it | Yes, it overrides it |
| Does it add new visual information? | No, it refines existing data | Yes, it generates new detail |
| Does it incentivise lower-effort art? | No | Potentially yes |
| Industry reception | Widely accepted | Heavily contested |

Is AI just a neutral tool? The microchip debate

The defender in the debate zoomed out to the broadest possible frame. They compared AI to the microchip. The same fundamental technology sits inside life-saving MRI machines and inside weapons of war. Often manufactured by the same companies. Should we refuse the medical equipment because the weapon exists?

To the defender, AI is just a neutral tool. What matters is who wields it.

The counter was sharp. A microchip is computationally a blank slate. AI is not. The massive technology companies pushing AI forward are not doing it to make video games look prettier. The primary driving forces are global data harvesting, large government contracts, and predictive surveillance infrastructure. Gaming, in this view, is a useful byproduct: something to normalise and fund a technology whose actual purpose is to extract, analyse, and predict human behaviour at scale.

"Focusing on how good AI is for rendering graphics is like following a carrot on a stick all the way to the slaughterhouse."

This reframes the AI in gaming debate entirely. It is not just about DLSS 5 or rendering. It is about the broader system AI is built within and what that system is designed to do.

AI vs human creativity: forecasts don’t defeat judgements

The most instructive part of the DLSS 5 row is not the technology. It is the complete failure of two sides to have the same conversation.

The trajectory argument

Side A argued trajectory. This is early. It will improve. The underlying artist work is still there. This kind of ML-assisted rendering is probably inevitable. Do not judge the category by version 0.9.

The values argument

Side B argued values. It looks bad now. It violates artist intent now. It encodes ugly social biases now. It incentivises bad industry behaviour now. Even if it improves, they may still reject the kind of thing it is.

These are not opposing answers to the same question. They are different questions wearing the same coat.

Side A was making a forecast. Side B was making a judgement. And forecasts do not defeat judgements. Saying “it is the future, so accept it” sounds like surrender dressed as pragmatism to anyone making a values-based objection.

A more productive framing might have been to separate three distinct questions: whether this specific implementation looks bad, whether generative image alteration is acceptable in rendering at all, and whether ML-assisted rendering as a broader category will become common. The first is probably true. The second is genuinely debatable. The third is very plausible. But lumping all three together made the conversation a brawl instead of a debate.

"You brought a technical-evolution argument to a values-and-authorship fight. Different battlefield. Wrong weapon."

This exact dynamic of the AI vs human creativity debate plays out across every creative field: music, illustration, writing, AI in web design, AI’s growing role in SEO, and film. Some analysts believe AI has already reached an inflection point comparable to the early internet. The trajectory camp argues capability. The values camp argues meaning. They talk past each other because they are running different tests on the same evidence.

As an outsider listening to the original debate, I found myself at roughly 60/40 in favour of the defender’s position. Not because the values-based objections are wrong. They are not. But because dismissing an entire technological category based on a rough first implementation seems premature when the history of rendering is full of exactly this pattern. The critics’ concerns about bias, incentives, and artistic override are valid and urgent. The defender’s point that those are problems to solve within the technology, not reasons to reject the technology outright, also holds weight. Both things can be true.

According to the 2026 GDC State of the Game Industry survey, 52% of game developers now believe generative AI has a negative impact on the industry, up from 30% just one year earlier. The values camp is growing.
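It is worth being precise about how large that shift is, because "up from 30% to 52%" can be read two ways. The sketch below uses only the two survey figures quoted above; the variable names are illustrative.

```python
# Survey figures as quoted in the article (share of developers viewing
# generative AI negatively).
previous_share = 30  # per cent, a year earlier
current_share = 52   # per cent, 2026 survey

# Absolute shift, measured in percentage points.
point_change = current_share - previous_share

# Relative growth of the negative camp, measured in per cent.
relative_change = point_change / previous_share * 100

print(f"Change: {point_change} percentage points")
print(f"Relative increase: {relative_change:.1f}%")
```

That is a rise of 22 percentage points, or about 73% more developers holding a negative view than a year earlier.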

Developer scepticism towards generative AI is rising sharply. The GDC 2026 survey showed 52% negative sentiment, up from 30% in 2025.


The same tension applies to any field where AI touches creative work. In professional web design services and digital marketing services, the tools that succeed are the ones that support human creative decisions rather than overriding them. The pattern is consistent: audiences trust work that feels authored, and they can tell the difference.

The counter-perspective: is the criticism even consistent?

There is a fair challenge here that deserves an honest answer: is the criticism even consistent?

Artists used to agonise over baked lighting, manually placing static light sources for each scene, carefully controlling every shadow and highlight. Then dynamic lighting arrived. Then ray tracing. Both fundamentally changed how light behaves in a scene, sometimes overriding artistic intent in the process. Where was the outrage then?

If you accept ray tracing, which simulates light physics and changes how a scene looks, then why is DLSS 5, which also changes how a scene looks through AI prediction, a step too far? What is the actual line being drawn here? And is it being drawn consistently, or only when the word “AI” is attached?

A more grounded concern is facial consistency. If the AI renders a character’s face slightly differently each time it appears on screen, that breaks immersion in a way that inconsistent lighting does not. That is a practical problem, not a philosophical one.

The criticism may well be valid. But it risks being selectively applied only to technology carrying the AI label, and that selective application weakens the argument.

The question nobody can answer yet

Here is the question that sits at the bottom of the DLSS 5 debate, and nobody has a good answer for it.

Imagine five, maybe ten years from now. Generative AI rendering continues to improve until it is absolutely, indistinguishably flawless. Every face is perfect. Every light source behaves exactly as physics dictates. Every texture is photo-real.

Now imagine that players actually prefer it. They prefer the AI’s heavily altered, beautified, algorithmic version of the game over the human artist’s grittier, more deliberately imperfect creation.

If the audience actively chooses the AI’s flawless illusion over the human’s intentional work, does the original artist’s intent even matter any more?

If the audience wants the illusion, then who are we actually building these digital worlds for? The creator, or the algorithm?

The question nobody can answer: if the audience prefers the AI version, does artistic intent still matter?


There is no clean answer. That is exactly the point. And that is what makes DLSS 5 so much bigger than a graphics card feature. It is a question about who owns the creative decisions in the things we build, play, and experience. It is the same question facing web designers wondering if their craft is dying and marketers navigating AI-driven SEO.

Conclusion: AI as a tool, not a dictator

DLSS 5 is not inherently bad technology. The raw capability behind real-time neural rendering is, by any honest measure, extraordinary. The problem is not what it can do but who gets to decide when it does it.

The backlash exists because Nvidia presented AI-generated output as a universal improvement, rather than an optional tool that artists and players could control. That framing turned a technical achievement into a creative imposition. A rendering system that respects artistic intent and gives artists meaningful control over the output would face a fraction of the criticism. One that overrides their work by default will continue to face all of it.

The broader lesson stretches well beyond gaming. Whether the subject is AI in web design, AI’s growing role in SEO, or AI-assisted content creation, the pattern is the same: AI works best when it supports human decisions, not when it replaces them. The moment it crosses from scaffolding to override, the pushback is swift and justified.

If you are thinking about how AI fits into your own digital strategy without losing the human element that makes your brand distinctive, our web design services and digital marketing services are built on exactly that principle. Get in touch and we can talk it through.

Frequently asked questions

What is DLSS and how does it work?

DLSS (Deep Learning Super Sampling) is Nvidia’s AI-powered rendering technology. Previous versions upscaled lower-resolution images to save GPU power. DLSS 5 goes further, using neural rendering to add photorealistic lighting and material detail in real time. It predicts what high-fidelity visuals should look like based on patterns learned from vast image datasets.

Is DLSS 5 the same as AI generated art?

Not exactly. AI generated art typically creates images from scratch using text prompts. DLSS 5 works within a game’s existing assets but alters how they appear on screen, changing lighting, faces, and surface detail through AI prediction. The distinction matters because the original artist’s work exists on disk, but the final image the player sees can be significantly different.

Does DLSS 5 have an AI bias problem?

Critics argue yes. Because the AI learns from internet-scraped images, it tends to reproduce the same beauty standards and visual homogeneity found in that data. This AI bias can override the deliberate, diverse character designs created by game artists.

Will AI replace artists in gaming?

Unlikely in full, but the concern is real. AI tools like DLSS 5 may reduce the need for certain types of manual rendering work while increasing the need for artists who understand how to direct and constrain AI output. The role shifts from creating every detail to supervising what the AI produces.

What is DLSS 5 explained simply?

DLSS 5 is Nvidia’s system that uses artificial intelligence to rebuild how games look in real time. It adds lighting and detail the original game did not render, aiming for photorealism. It has been criticised for overriding game artists’ intended visual style.

What is the difference between frame generation and neural rendering?

Frame generation (DLSS 3/4) creates new frames between rendered ones to boost frame rates. Neural rendering (DLSS 5) goes further by generating entirely new visual detail, lighting, and material effects that were never in the original render. Frame generation is about speed. Neural rendering is about altering appearance.